Planner Overview

In the Overview and Quick Start Guide, you learned how Bixby turns natural language input into a structured intent. This guide discusses how Bixby starts with that intent and, using your models, dynamically generates a program.

Models and Intents

As you've seen, two aspects of programming for Bixby make it different from other platforms:

Bixby uses declarative programming rather than imperative programming. Instead of writing an explicit procedure to arrive at a goal (for example, booking a dinner reservation), you declare the possible input values in detail and declare the desired output. In Bixby, these declarations are defined in models: the concepts and actions that you've already seen. Concepts describe entities: inputs, outputs, and their relations to one another. They might represent recipes, ingredients, airports, or airline reservations. Actions describe capabilities: functions that relate concepts to goals. Actions might find recipes, get nutrition information, or book flights between airports. Each action consists of three parts: the model, which describes its inputs and outputs; an implementation, which is either a JavaScript function in your capsule or a web service; and an endpoint that connects the model to its implementation, whether local or remote.
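As an illustration of the implementation part, the following is a minimal sketch of what the JavaScript behind the Dice capsule's RollDice action could look like. The function name `rollDice`, the returned field names `sum` and `roll`, and the injectable `random` parameter are assumptions made for this example; the export convention Bixby expects and the endpoint that binds the function to the action model are not shown.

```javascript
// Hypothetical implementation of a RollDice action: it receives the values of
// the action's two inputs (NumDice and NumSides) and returns a plain object
// standing in for a RollResult concept.
// `random` defaults to Math.random; passing a fixed function makes it testable.
function rollDice(numDice, numSides, random = Math.random) {
  if (!Number.isInteger(numDice) || numDice < 1) {
    throw new RangeError("numDice must be a positive integer");
  }
  if (!Number.isInteger(numSides) || numSides < 2) {
    throw new RangeError("numSides must be an integer >= 2");
  }
  const roll = [];
  for (let i = 0; i < numDice; i++) {
    // Map a value in [0, 1) onto the faces 1..numSides.
    roll.push(Math.floor(random() * numSides) + 1);
  }
  const sum = roll.reduce((total, face) => total + face, 0);
  return { sum, roll };
}

// Usage: "roll 2 6-sided dice" (output is random)
console.log(rollDice(2, 6));
```

The function only turns input values into an output; it never decides when it runs. In the declarative model, that decision belongs to the Planner.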

Rather than having you, the developer, compose functions into a program that reaches a goal, Bixby uses your models and the intent derived from the user's natural language input to build the program on its own. The intent starts with concepts (such as a departure airport, an arrival airport, and a departure date) and ends with a goal that satisfies those conditions (a list of flights).
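The declarative split can be sketched in plain JavaScript: the developer supplies only data describing each action's inputs and output, and a separate routine decides which action fits an intent. All names here (`FindFlights`, `BookFlight`, `DepartureAirport`, `Passenger`, and so on) and the sample values are hypothetical, and the matching shown is only the simplest one-step case, not Bixby's Planner.

```javascript
// Declarations only: each hypothetical action lists the concept types it
// needs and the concept type it produces. Nothing says when to call it.
const actions = [
  { name: "FindFlights", inputs: ["DepartureAirport", "ArrivalAirport", "DepartureDate"], output: "Flight" },
  { name: "BookFlight", inputs: ["Flight", "Passenger"], output: "Reservation" },
];

// A hypothetical intent: concept values taken from the user's request,
// plus the goal the request is asking for.
const intent = {
  goal: "Flight",
  values: { DepartureAirport: "SFO", ArrivalAirport: "ORD", DepartureDate: "2024-05-01" },
};

// One-step matching: actions that output the goal and whose inputs are all
// already present in the intent.
function directMatches(intent, actions) {
  const supplied = new Set(Object.keys(intent.values));
  return actions
    .filter((action) => action.output === intent.goal)
    .filter((action) => action.inputs.every((type) => supplied.has(type)))
    .map((action) => action.name);
}

console.log(directMatches(intent, actions)); // [ 'FindFlights' ]
```

A request whose goal is a Reservation would find no direct match here, because no Flight or Passenger value was supplied; reaching it requires chaining actions together.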

Like models, intents can be expressed in the *.bxb format. When you run the Dice capsule from the quick start guide and give it the natural language request, "roll 2 6-sided dice", Bixby converts that to the following intent:

```
intent {
  goal { viv.dice.RollResult }
  value { viv.dice.NumDice (2) }
  value { viv.dice.NumSides (6) }
}
```

The Execution Graph

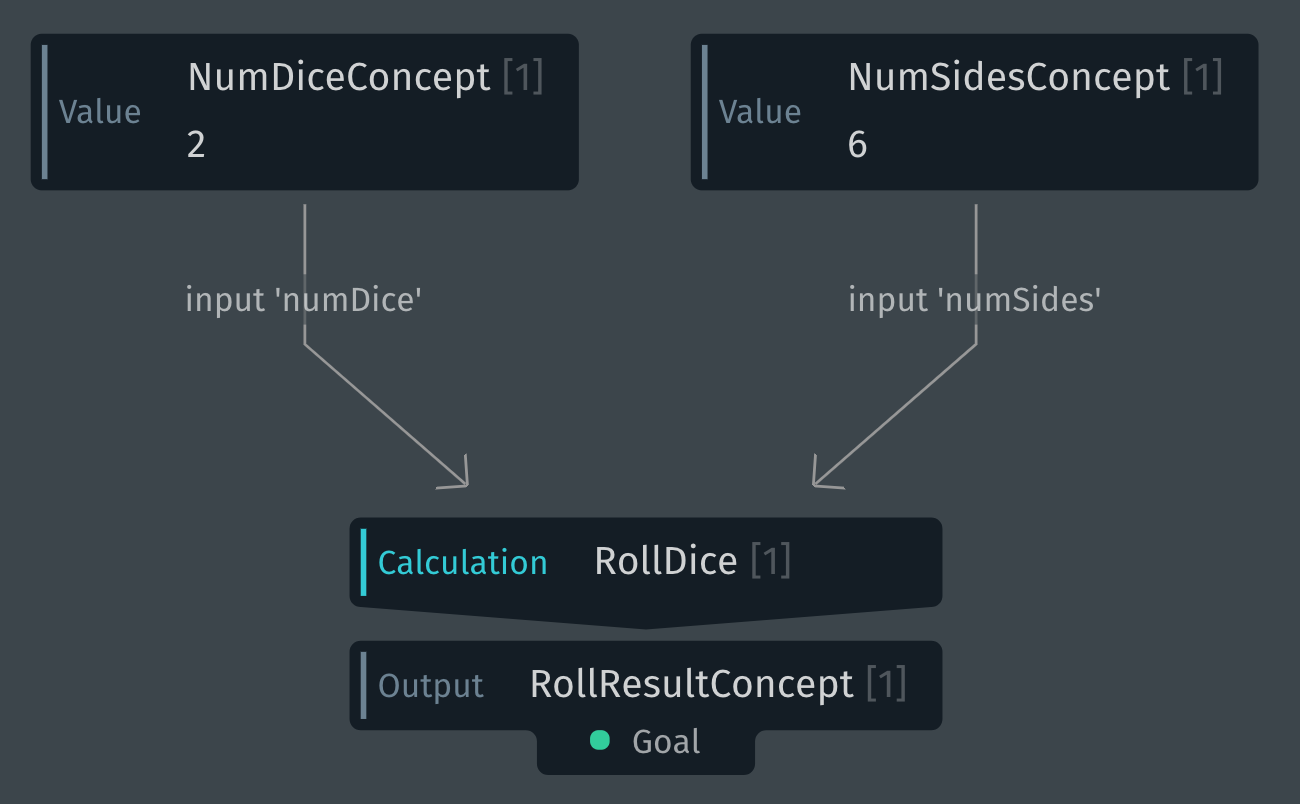

In the intent shown above, the goal is a RollResult concept, and the two input values are a NumDice concept and a NumSides concept. To get from the inputs to the desired goal, Bixby needs to create a plan that applies one or more actions to the supplied input values that will produce output that meets the desired goal.

In the Dice capsule, the RollDice action has two required inputs, a NumSides concept and a NumDice concept, and outputs a RollResult concept. So Bixby creates a plan that satisfies that intent by using RollDice:

Bixby's Planner creates a directed graph. The nodes of the graph are the models (the concepts and actions) that you have created for your capsule. The Planner dynamically generates a program by constructing an efficient graph that starts with the user-provided inputs and ends with the goal.
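A toy version of graph construction helps show the idea. The sketch below works backward from the goal: it picks an action whose output is the goal and recursively satisfies each input, either from user-supplied values or from another action's output, recording the graph's edges as it goes. It is a deliberate simplification: it takes the first action that works rather than searching for the most efficient graph, and `DescribeRoll` and `RollSummary` are hypothetical models added only to show chaining.

```javascript
// Hypothetical action declarations: RollDice is from the Dice capsule;
// DescribeRoll/RollSummary are invented here to show a two-step chain.
const diceActions = [
  { name: "RollDice", inputs: ["NumDice", "NumSides"], output: "RollResult" },
  { name: "DescribeRoll", inputs: ["RollResult"], output: "RollSummary" },
];

// Work backward from `goal`. Returns { steps, edges }, where `steps` lists
// actions in an executable order and `edges` are [from, to] pairs of the
// directed graph, or null if the goal can't be reached from `supplied`.
function plan(goal, supplied, actions, visiting = new Set()) {
  if (supplied.has(goal)) return { steps: [], edges: [] }; // user-provided input node
  if (visiting.has(goal)) return null; // cycle guard
  visiting.add(goal);
  for (const action of actions.filter((a) => a.output === goal)) {
    const steps = [];
    const edges = [];
    let satisfied = true;
    for (const input of action.inputs) {
      const sub = plan(input, supplied, actions, visiting);
      if (sub === null) {
        satisfied = false;
        break;
      }
      steps.push(...sub.steps);
      edges.push(...sub.edges, [input, action.name]);
    }
    if (satisfied) {
      visiting.delete(goal);
      steps.push(action.name);
      edges.push([action.name, goal]);
      return { steps: [...new Set(steps)], edges }; // drop repeated steps
    }
  }
  visiting.delete(goal);
  return null;
}

const supplied = new Set(["NumDice", "NumSides"]);
console.log(plan("RollResult", supplied, diceActions).steps); // [ 'RollDice' ]
console.log(plan("RollSummary", supplied, diceActions).steps); // [ 'RollDice', 'DescribeRoll' ]
console.log(plan("RollResult", new Set(["NumDice"]), diceActions)); // null
```

For the dice intent, the edges run from NumDice and NumSides into RollDice and from RollDice to RollResult. When NumSides is missing, this sketch simply fails; at execution time Bixby can instead prompt the user for the missing value.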

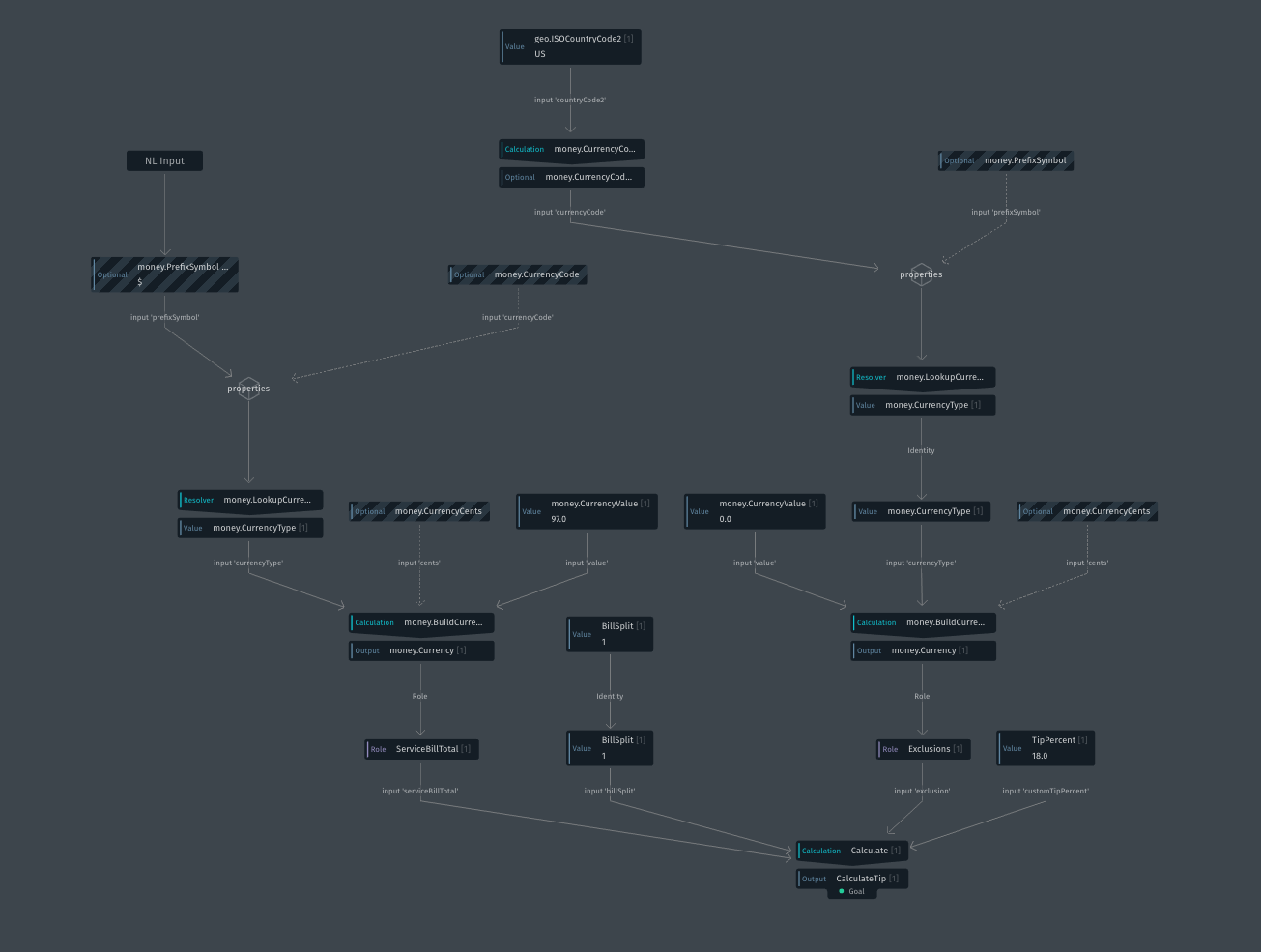

While constructing the graph for the dice-rolling capsule is pretty simple, the graph might be more complex for more complex actions. Consider a tip calculator: the user asks Bixby, "what's an 18% tip on a $97 bill".

To answer this query, the Planner creates this graph:

The user's input values are mapped (by training) to the concepts gratuity.TipPercent, money.PrefixSymbol, and money.CurrencyValue. To satisfy the CalculateTip goal, Bixby needs values for ServiceBillTotal, BillSplit (how many ways the bill is being split), Exclusions (amounts that aren't part of the tip calculation), and the desired tip percentage. Bixby's Planner combines the money.PrefixSymbol and money.CurrencyValue primitive concepts into the ServiceBillTotal structure concept, and uses default values for BillSplit (a value of 1, so the bill is not split) and Exclusions (no exclusions).
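To make the subplan and the default values concrete, here is a minimal sketch in plain JavaScript. `buildServiceBillTotal` and `calculateTip` are hypothetical stand-ins, not the gratuity capsule's real code, and the arithmetic (tip computed on the total minus exclusions, then divided by the split) is an assumption about what such a calculator would do.

```javascript
// Hypothetical stand-in for the subplan that combines two primitive values
// (money.PrefixSymbol and money.CurrencyValue) into one structure value
// (gratuity.ServiceBillTotal).
function buildServiceBillTotal(prefixSymbol, currencyValue) {
  return { symbol: prefixSymbol, value: currencyValue };
}

// Hypothetical CalculateTip: inputs the user didn't mention fall back to
// defaults, mirroring the Planner's choice of BillSplit = 1 and no Exclusions.
function calculateTip({ serviceBillTotal, tipPercent, billSplit = 1, exclusions = 0 }) {
  const tippable = serviceBillTotal.value - exclusions;
  const tip = (tippable * tipPercent) / 100;
  const perPerson = tip / billSplit;
  // Round to cents for display.
  return {
    symbol: serviceBillTotal.symbol,
    tip: Math.round(tip * 100) / 100,
    tipPerPerson: Math.round(perPerson * 100) / 100,
  };
}

// "what's an 18% tip on a $97 bill?"
const total = buildServiceBillTotal("$", 97.0);
console.log(calculateTip({ serviceBillTotal: total, tipPercent: 18.0 }));
// { symbol: '$', tip: 17.46, tipPerPerson: 17.46 }
```

Omitting `billSplit` and `exclusions` from the call plays the role of the Planner's defaults: 18% of $97 is $17.46, with the bill unsplit and nothing excluded.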

After the graph is created, Bixby executes the program by traversing the graph, performing one action at a time. When required inputs are missing values, Bixby might prompt the user for more information, or it might fill the inputs based on context (such as the user's current location, or the current date and time). If your capsule uses Selection Learning, Bixby might also select values based on what it has previously learned about the user's preferences (such as a preferred tip percentage). The graph with all required values filled in is Bixby's execution graph for the intent. After you run a query in the Simulator, the Bixby Developer Studio debugger displays the execution graph, and it can also show the intent Bixby used to create and execute the graph:
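One way to picture how a missing input gets filled during execution is a resolver that checks several sources in turn and falls back to prompting. The precedence used below (user-supplied values, then context, then learned preferences, then defaults, then a prompt) is an assumption for illustration, as are the function names and the learned 20% tip preference; this guide doesn't specify Bixby's actual resolution logic.

```javascript
// Hypothetical resolver for a single required input. The order of the checks
// is an assumed precedence, not Bixby's documented behavior.
function resolveInput(name, { intentValues = {}, context = {}, learned = {}, defaults = {} } = {}) {
  if (name in intentValues) return { value: intentValues[name], source: "user" };
  if (name in context) return { value: context[name], source: "context" };
  if (name in learned) return { value: learned[name], source: "learned" }; // e.g. Selection Learning
  if (name in defaults) return { value: defaults[name], source: "default" };
  return { value: undefined, source: "prompt" }; // nothing known: ask the user
}

// Resolve every required input of an action, in declaration order.
function resolveAll(inputNames, sources) {
  return inputNames.map((name) => ({ name, ...resolveInput(name, sources) }));
}

// "what's the tip on a $97 bill" (no percentage given)
const resolved = resolveAll(["ServiceBillTotal", "TipPercent", "BillSplit", "Exclusions"], {
  intentValues: { ServiceBillTotal: 97.0 },
  learned: { TipPercent: 20 }, // hypothetical learned preference
  defaults: { BillSplit: 1, Exclusions: 0 },
});
for (const input of resolved) {
  console.log(`${input.name}: ${input.value} (${input.source})`);
}
// ServiceBillTotal: 97 (user)
// TipPercent: 20 (learned)
// BillSplit: 1 (default)
// Exclusions: 0 (default)
```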

```
intent {
  goal {
    viv.gratuity.CalculateTip {
      viv.gratuity.CalculateTip
    }
  }
  subplan {
    goal { viv.gratuity.ServiceBillTotal }
    value { viv.money.PrefixSymbol ($) }
    value { viv.money.CurrencyValue (97.0) }
  }
  value { viv.gratuity.TipPercent (18.0) }
}
```

The order in which the Planner executes actions affects your capsule's user experience. If information is missing and can't be supplied from context or from learning, the order in which the user is prompted for the missing values might matter to you. For example, if the user says "book me a flight to Chicago" to a flight booking capsule, you should first ask when the user wants to leave, not when the user wants to return. Also, be aware that the user might be prompted for information about models that you did not create but that were imported from other capsules and used by Bixby in the execution graph.
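A small sketch of the idea: if a capsule keeps a preferred order for its inputs, the prompt sequence is that order filtered down to whatever is still missing. The `PROMPT_ORDER` list, the concept names, and the assumption that the departure airport came from context are all hypothetical; this guide doesn't describe the mechanism Bixby uses to order prompts.

```javascript
// Hypothetical: the order in which a flight-booking capsule would like
// missing values requested. Names are illustrative only.
const PROMPT_ORDER = ["ArrivalAirport", "DepartureAirport", "DepartureDate", "ReturnDate"];

// Given the values already known, return the missing inputs in the order
// the user should be prompted for them.
function promptSequence(known, order = PROMPT_ORDER) {
  return order.filter((name) => !(name in known));
}

// "book me a flight to Chicago", with the departure airport filled from
// context (for example, the user's current location).
console.log(promptSequence({ ArrivalAirport: "ORD", DepartureAirport: "SFO" }));
// [ 'DepartureDate', 'ReturnDate' ]
```

Asking for the departure date before the return date falls out of the list order, which is the behavior the flight example calls for.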

If your capsule doesn't behave as you expect, your first step should be to look at the generated execution graph in the debugger and check whether it is reasonable and behaves the way you intended.

Keep in mind that when Bixby receives a user request, it attempts to identify a relevant capsule, or reports low confidence if no capsule is relevant (for example, if the user's request is random or garbage). Once a capsule is chosen, Bixby tries to identify the most relevant goal for the utterance. If your capsule has only one goal, that goal is considered the best one. During testing you are restricted to your own capsule, so make sure your capsule handles all of the utterances for your use cases. While testing, you don't need to worry about unrelated utterances matching your capsule, because that won't happen for your users.